Iterative Development: Installation Building Process

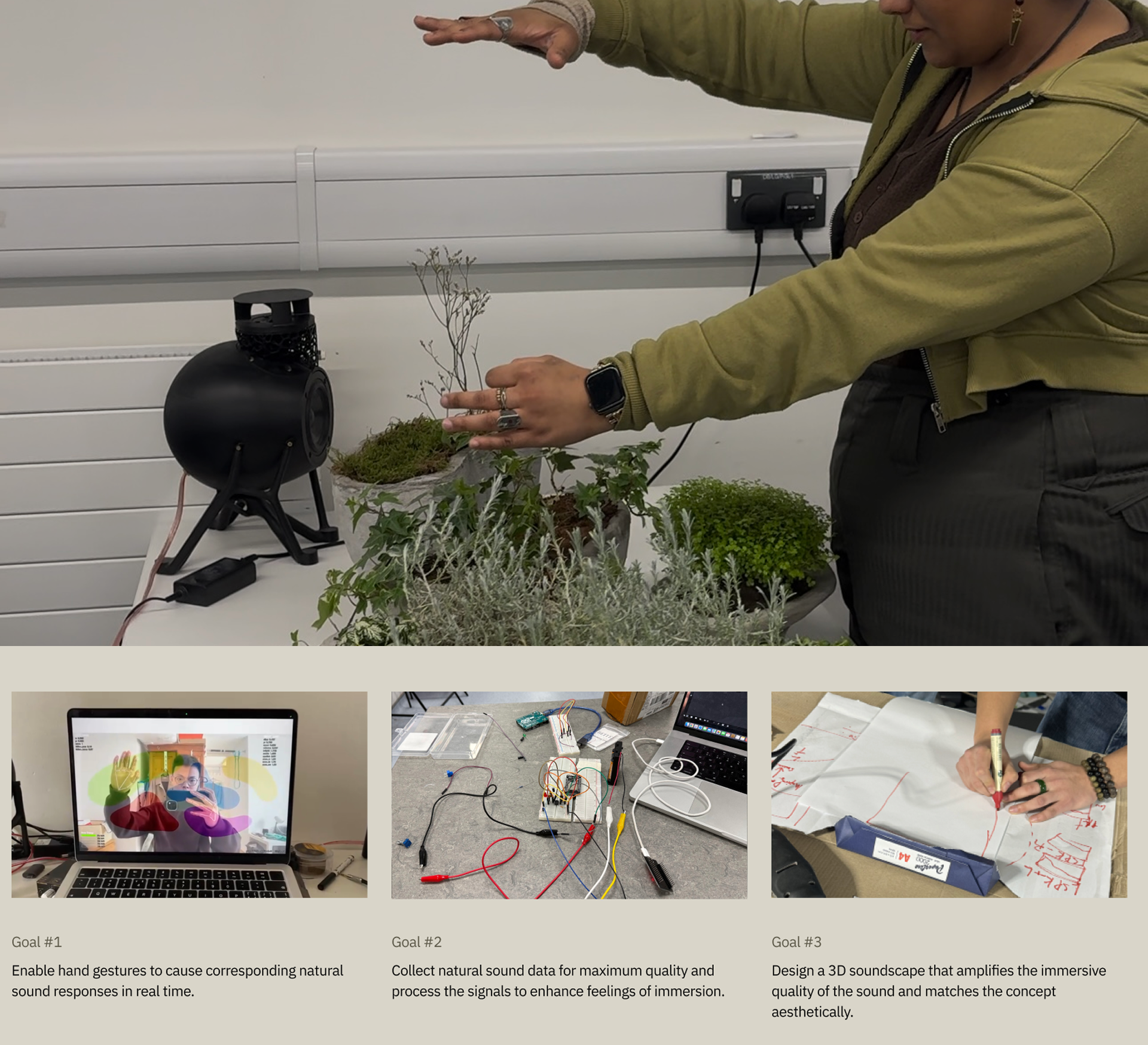

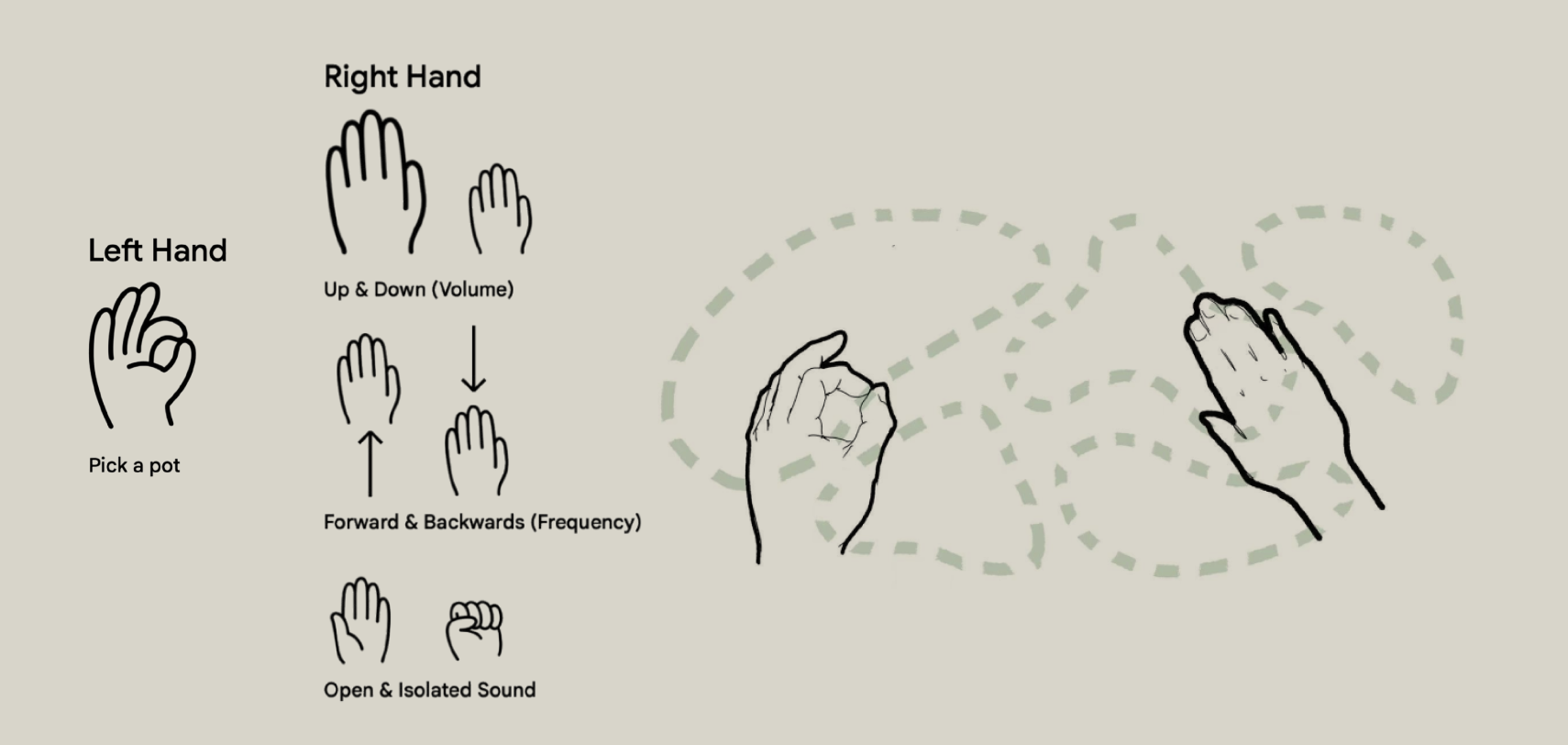

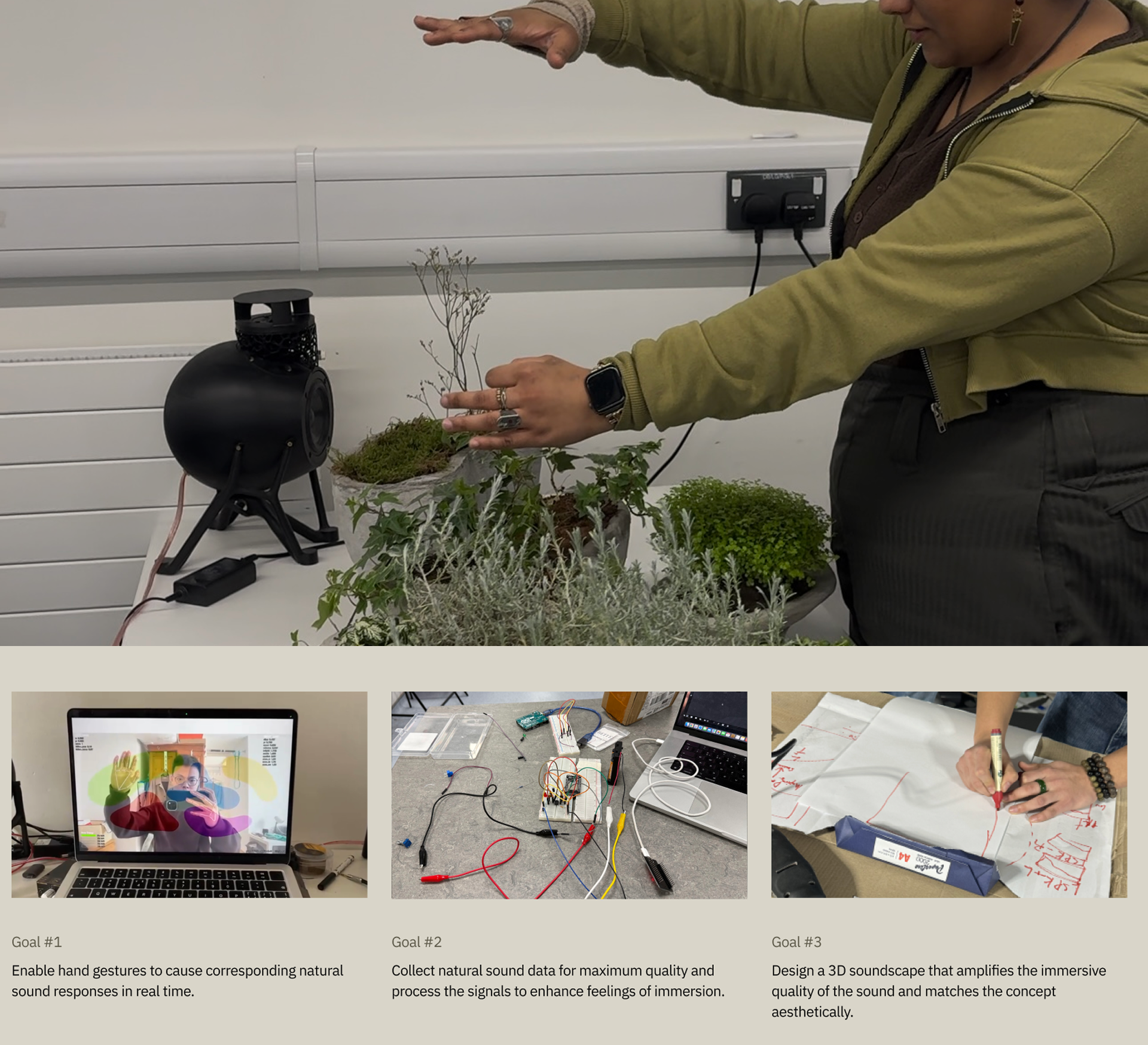

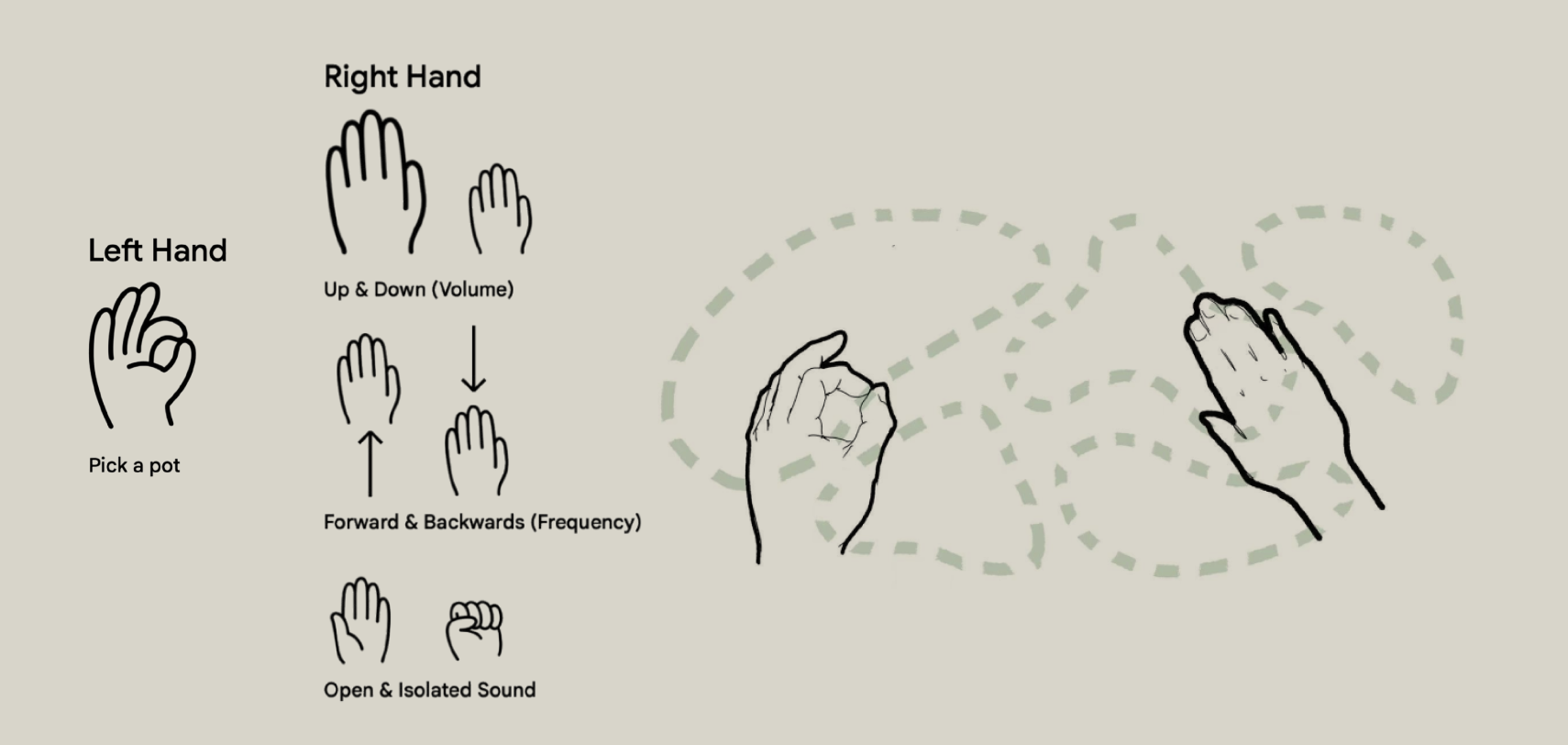

Iterative Development: Gesture Selection

To make the installation accessible without prior instructions, we drew inspiration from orchestra conductors. We mapped complex audio parameters directly to natural hand movements, ensuring that the bodily interaction felt immediately intuitive to the participants.
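A minimal sketch of this kind of gesture-to-parameter mapping, assuming the hand tracker reports a normalized position in the camera frame. The `AudioParams` fields, the `hand_to_params` name, and the height-to-loudness and left/right-to-pan assignment are illustrative assumptions, not the installation's actual mapping.

```python
from dataclasses import dataclass


@dataclass
class AudioParams:
    gain: float  # linear gain, 0.0 (silent) to 1.0 (full level)
    pan: float   # stereo position, -1.0 (left) to 1.0 (right)


def clamp(value: float, low: float, high: float) -> float:
    """Limit value to the closed interval [low, high]."""
    return max(low, min(high, value))


def hand_to_params(x: float, y: float) -> AudioParams:
    """Map a normalized hand position to gain and pan.

    x and y are assumed to lie in [0, 1] with the origin at the top-left
    of the camera frame, so a raised hand has a small y.
    """
    x = clamp(x, 0.0, 1.0)
    y = clamp(y, 0.0, 1.0)
    gain = 1.0 - y       # raising the hand increases loudness
    pan = 2.0 * x - 1.0  # moving the hand right pans the sound right
    return AudioParams(gain=gain, pan=pan)
```

For example, `hand_to_params(0.5, 0.0)` describes a hand raised to the top centre of the frame and yields full gain with a centred pan, much like a conductor lifting a hand to call for more volume.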

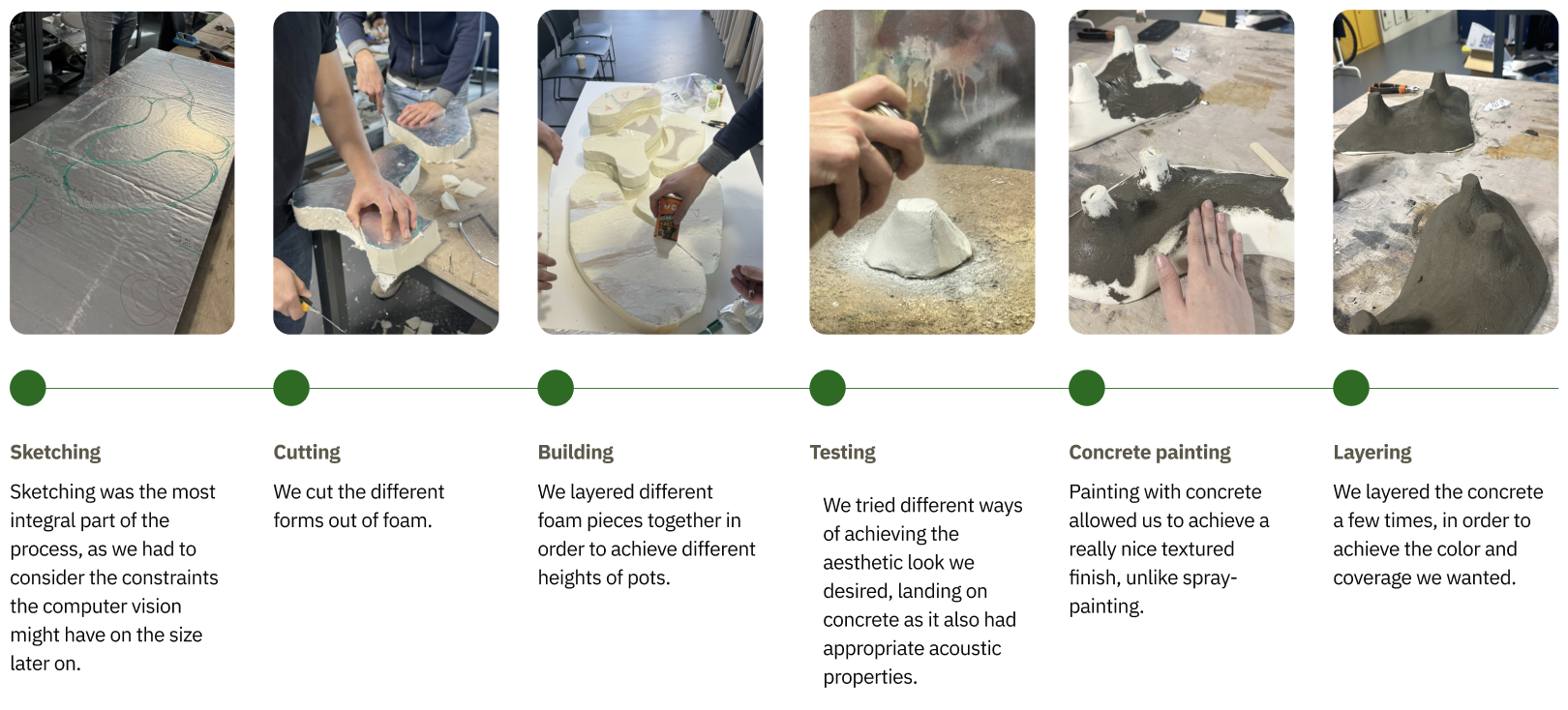

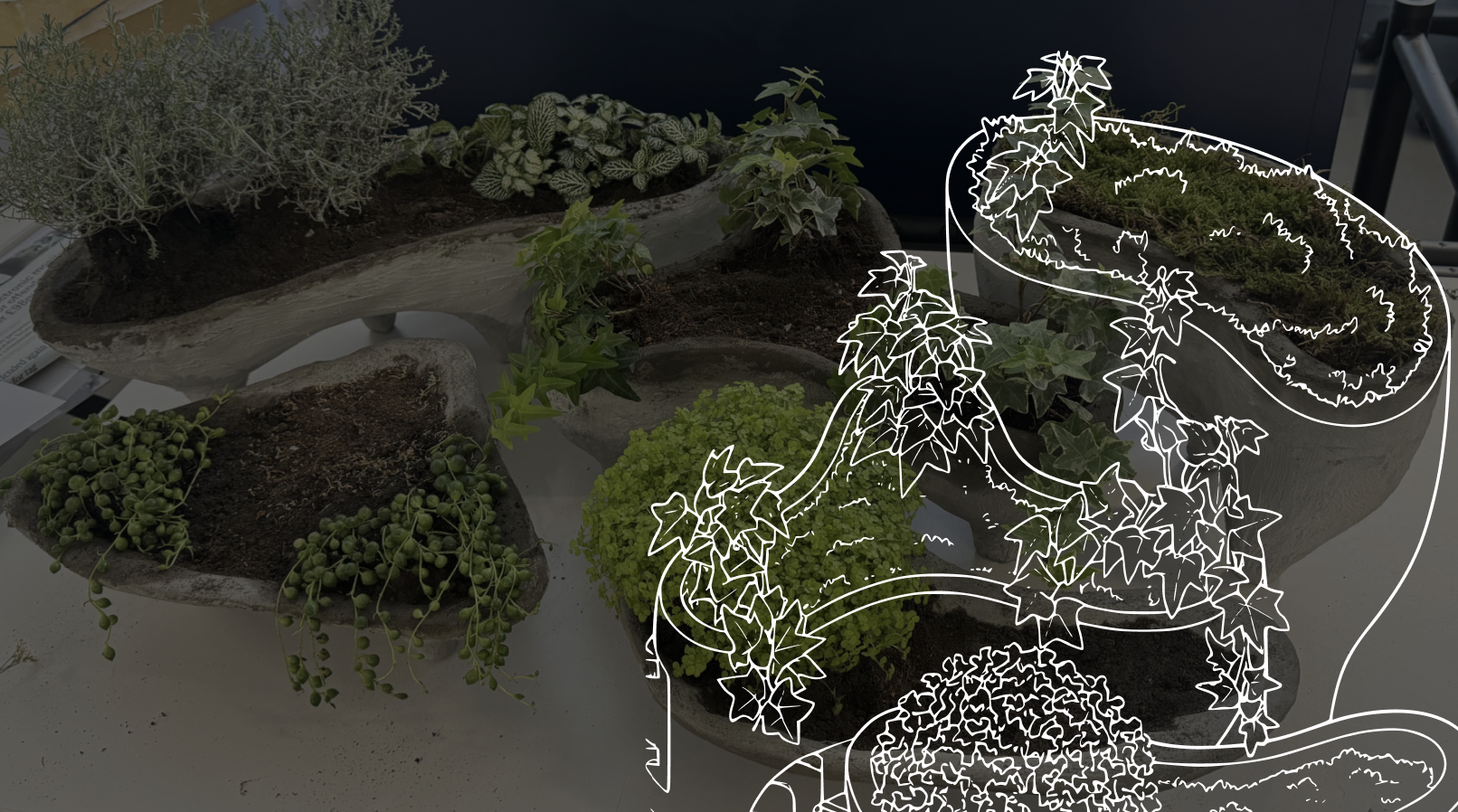

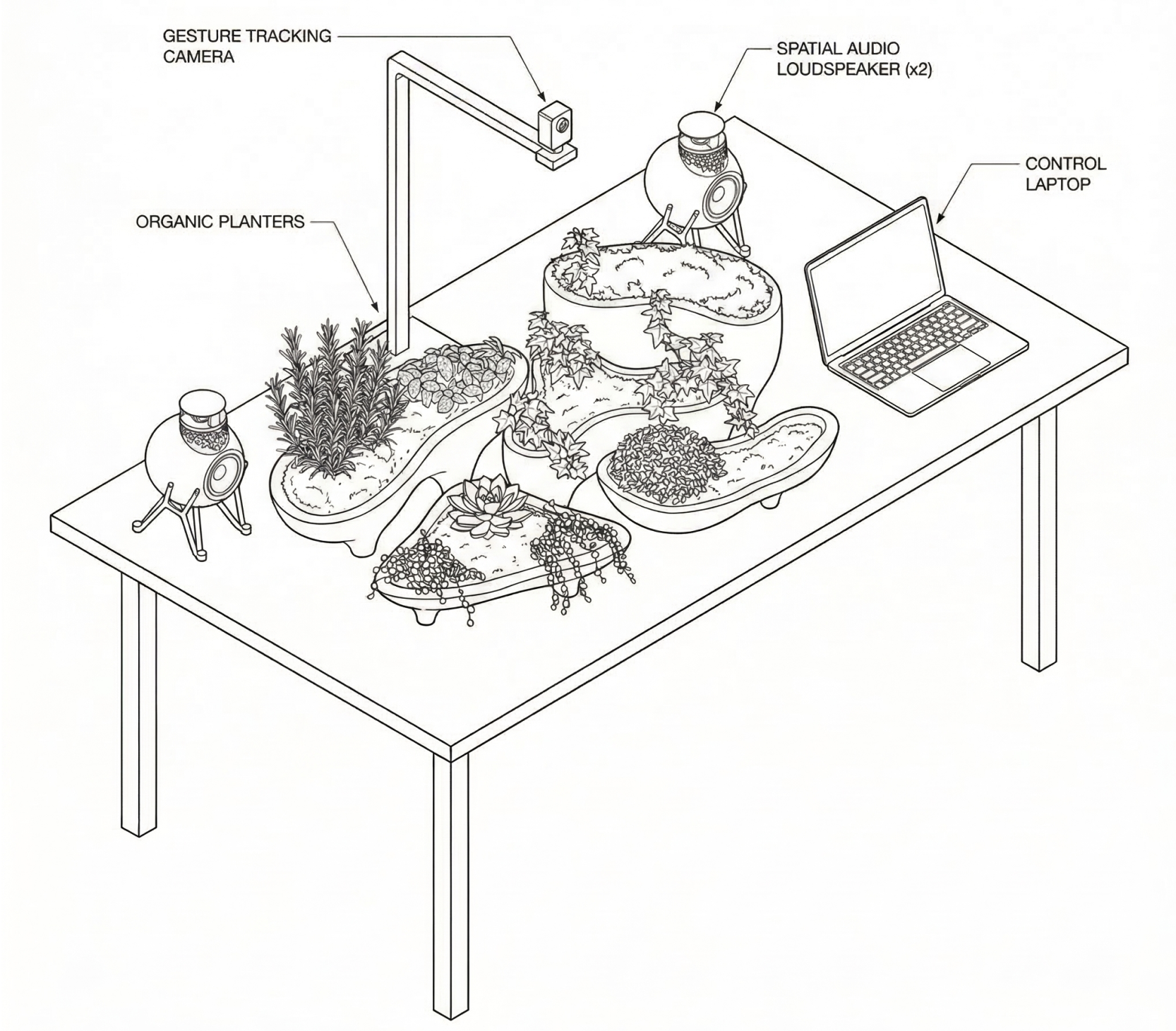

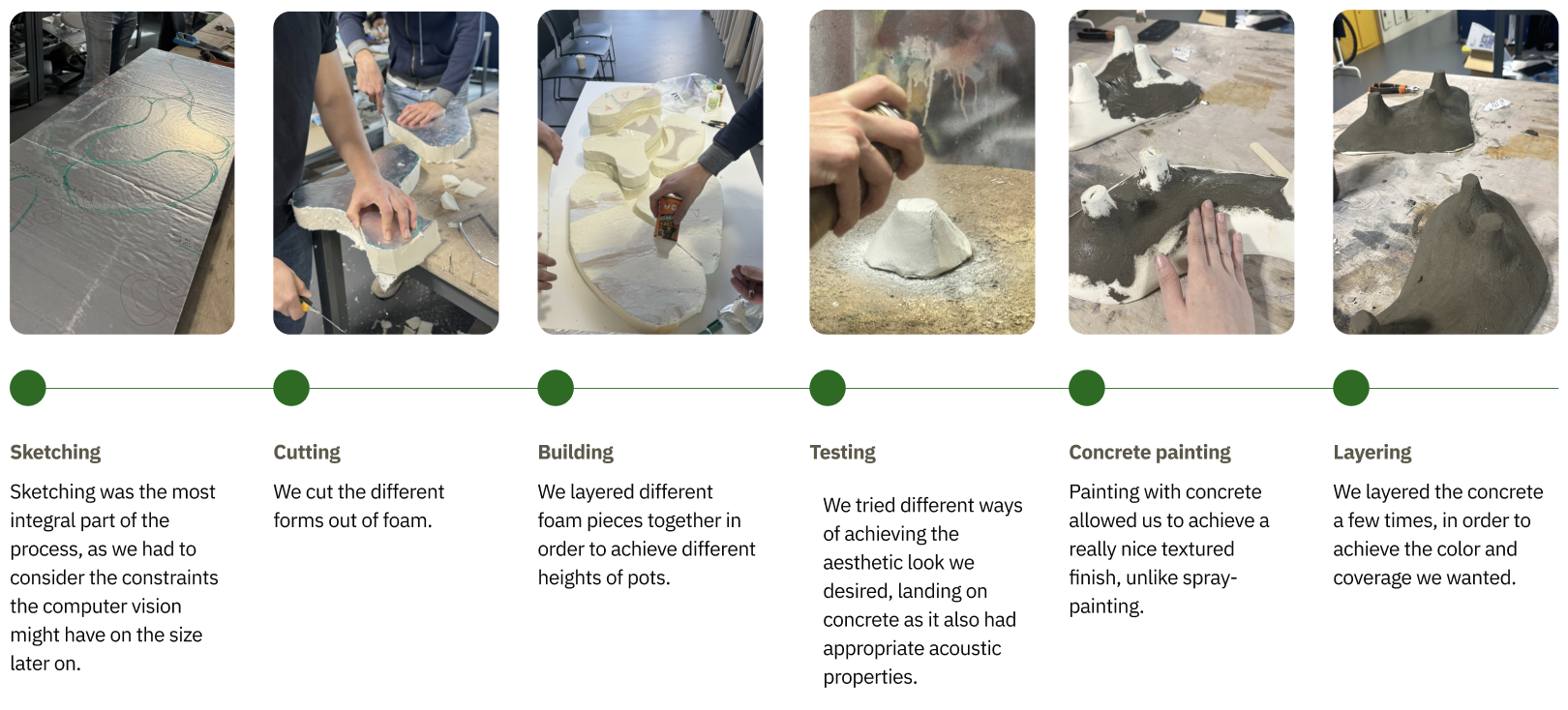

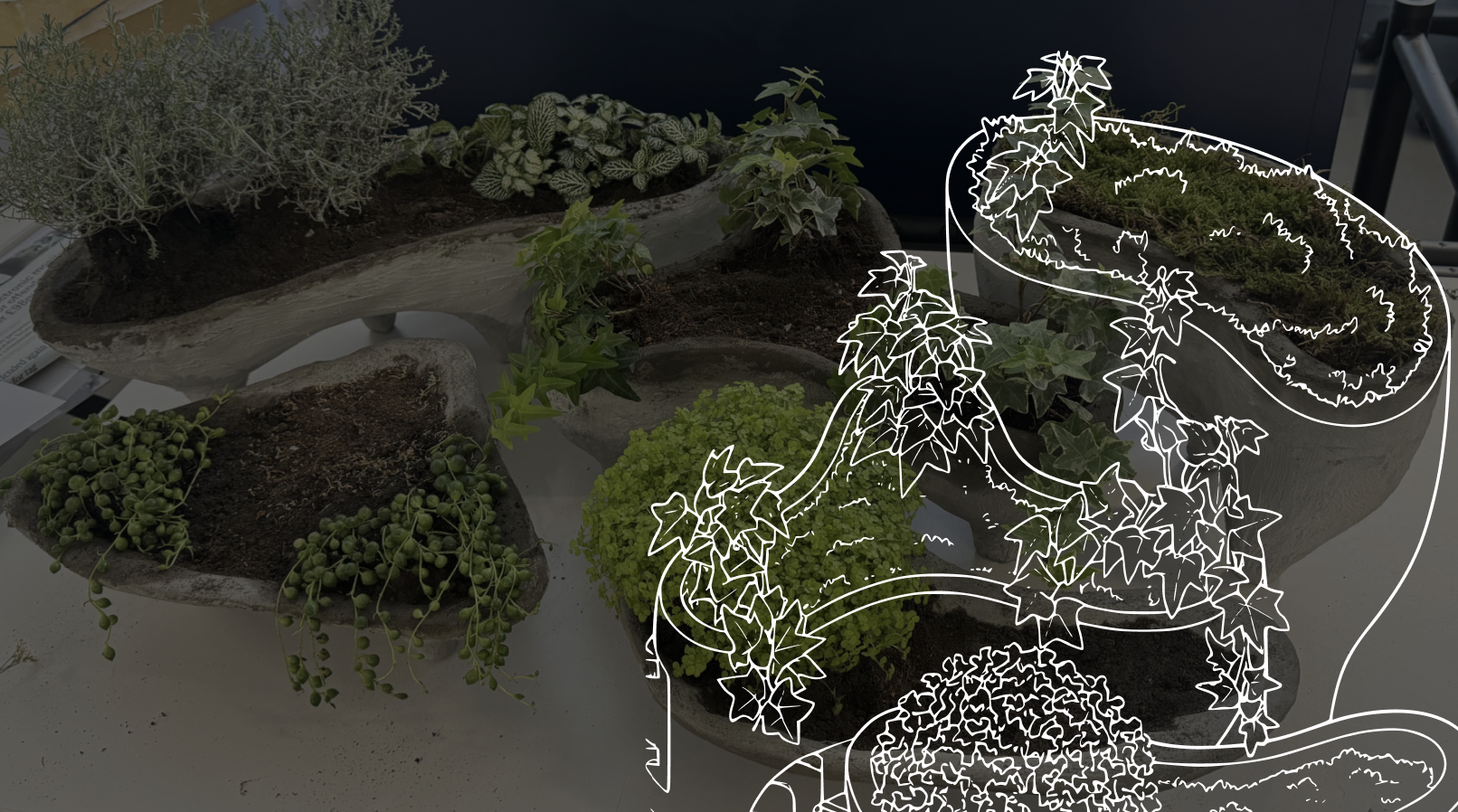

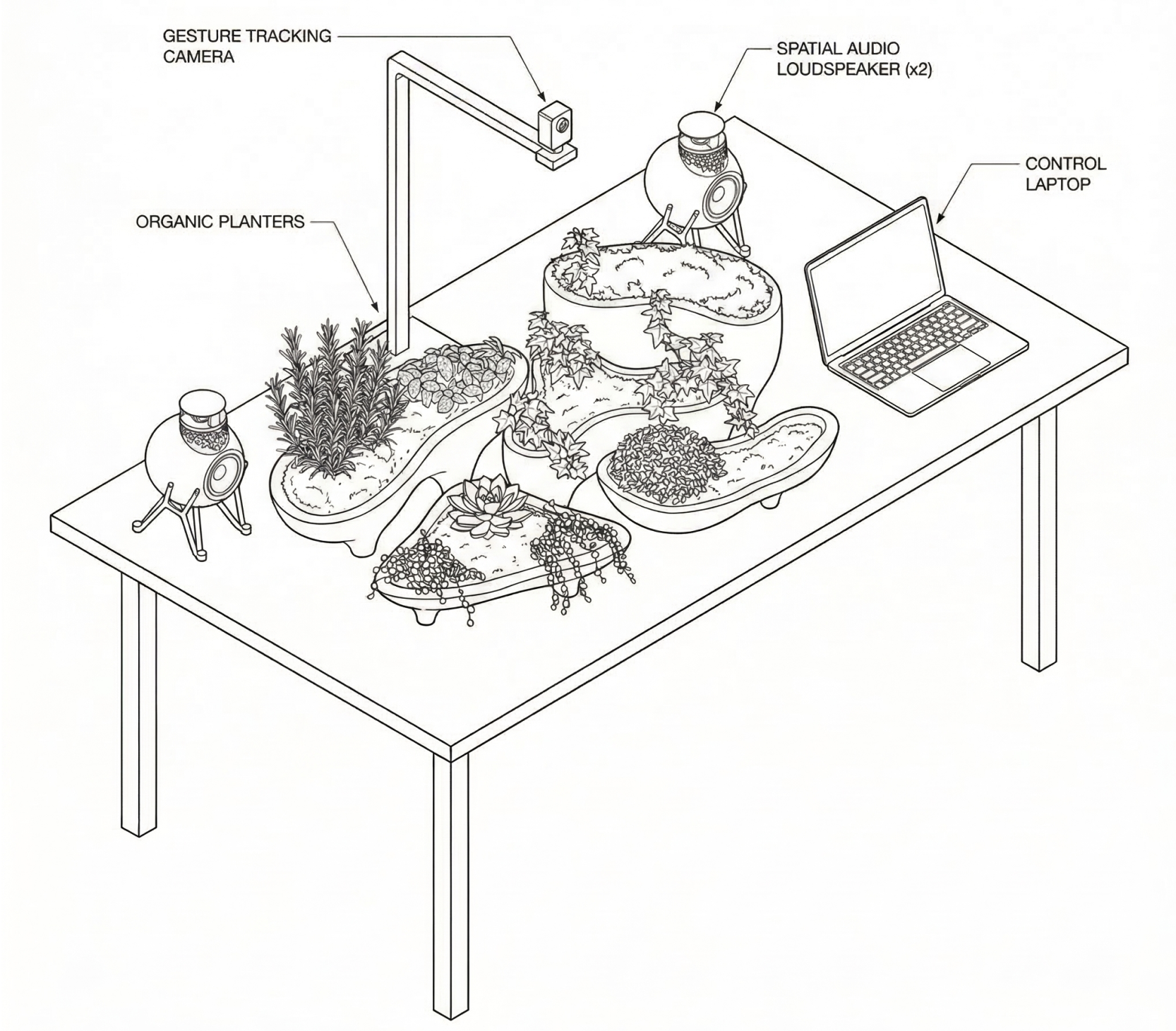

Iterative Development: Installation Design

The asymmetrical, curved surfaces were intentionally developed to diffuse sound, preventing harsh reflections while minimizing sound shadowing. By varying the heights and positions of the planters, the installation creates a consistent and immersive audio field in which sound moves freely between elements, reinforcing a natural, distributed soundscape.

Iterative Development: 3D Soundscape Design

Our goal was to move beyond the isolated, headphone-based experience to create a shared, externalized auditory space. By anchoring sound directly within the physical environment, the installation recreates how audio behaves and flows in nature.

Environmental Externalization: We opted for physical loudspeakers instead of headphones to achieve a stronger sense of externalization, allowing sound to be naturally distributed across the space like a physical forest.

Psychoacoustic Depth Mapping: To construct a perception of acoustic depth and layering, we applied distance perception principles: sources meant to sound farther away were rendered with lower amplitude and a higher proportion of reverberant energy relative to direct sound.

Audio-Visual Coherence: Two spatial speakers were angled inward toward the interaction zone. Their heights were aligned with the planters, so that the sonic sweet spot coincided with the physical elements and no audio-visual mismatch arose.
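The two distance cues named above can be sketched as a small function. This is an illustration under stated assumptions, not the installation's code: the direct sound follows the inverse-distance law, the reverberant level is treated as constant throughout the room, and `critical_distance_m` (the distance at which direct and reverberant energy are equal) is a hypothetical tuning parameter.

```python
from typing import Tuple


def distance_cues(distance_m: float, ref_distance_m: float = 1.0,
                  critical_distance_m: float = 3.0) -> Tuple[float, float]:
    """Return (direct_gain, wet_mix) for a source at distance_m.

    direct_gain follows the inverse-distance law (about -6 dB per doubling).
    wet_mix is the reverberant share of the total energy, assuming a constant
    reverberant level that equals the direct level at critical_distance_m.
    """
    d = max(distance_m, ref_distance_m)  # avoid gains above 1.0 up close
    direct_gain = ref_distance_m / d
    # Direct energy relative to the (constant) reverberant energy.
    direct_energy = (critical_distance_m / d) ** 2
    wet_mix = 1.0 / (1.0 + direct_energy)
    return direct_gain, wet_mix
```

With these defaults, a source at 1 m is mostly direct sound (wet mix 0.1), while a source at 6 m is quieter and mostly reverberant (wet mix 0.8), which is the foreground/background contrast the depth mapping relies on.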

Iterative Development: 3D Spatial Audio

I developed a real-time spatial audio system in Max/MSP to precisely position multiple environmental sounds, applying psychoacoustic algorithms before migrating to Python for system stability.

Binaural to Stereo Mapping: I used amplitude panning and subtle channel delays (50 ms) to simulate Interaural Level and Time Differences (ILD/ITD), giving each sound source a distinct horizontal position.

Multi-Source Signal Chains: Built independent processing chains for each botanical sound source, modulating gain, reverberation (AUReverb2), and filtering (svf~) to establish clear foreground and background relationships.

System Migration: Transitioned the entire spatialization framework from Max/MSP to Python to overcome scalability limits and ensure a robust, low-latency real-time installation.
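A minimal Python sketch of the panning-plus-delay idea, written with the standard library only. It is not the migrated system itself: the function names are hypothetical, constant-power panning is one common choice of amplitude panning law, and the delay ceiling is exposed as `max_delay_s`, whose default of about 0.66 ms (roughly the largest delay a human head produces naturally) is an assumption rather than a value taken from the installation.

```python
import math
from typing import List, Tuple


def pan_gains(azimuth_deg: float) -> Tuple[float, float]:
    """Constant-power (left, right) gains for an azimuth in [-90, 90] degrees."""
    az = max(-90.0, min(90.0, azimuth_deg))
    theta = (az + 90.0) / 180.0 * (math.pi / 2.0)  # 0 = hard left, pi/2 = hard right
    return math.cos(theta), math.sin(theta)


def channel_delay_samples(azimuth_deg: float, sample_rate: int,
                          max_delay_s: float = 0.00066) -> Tuple[int, int]:
    """(left, right) delays in samples; the channel farther from the source is delayed."""
    az = max(-90.0, min(90.0, azimuth_deg))
    delay = round(abs(math.sin(math.radians(az))) * max_delay_s * sample_rate)
    return (delay, 0) if az > 0 else (0, delay)


def spatialize(mono: List[float], azimuth_deg: float,
               sample_rate: int = 48000) -> Tuple[List[float], List[float]]:
    """Render a mono signal to stereo with level and time differences."""
    gain_l, gain_r = pan_gains(azimuth_deg)
    delay_l, delay_r = channel_delay_samples(azimuth_deg, sample_rate)
    length = len(mono) + max(delay_l, delay_r)
    left = [0.0] * length
    right = [0.0] * length
    for i, sample in enumerate(mono):
        left[i + delay_l] += gain_l * sample
        right[i + delay_r] += gain_r * sample
    return left, right
```

Calling `spatialize(samples, 45.0)` places a source to the right of centre: the right channel is louder and the left channel arrives slightly later. Each botanical source would get its own azimuth before the channels are summed.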

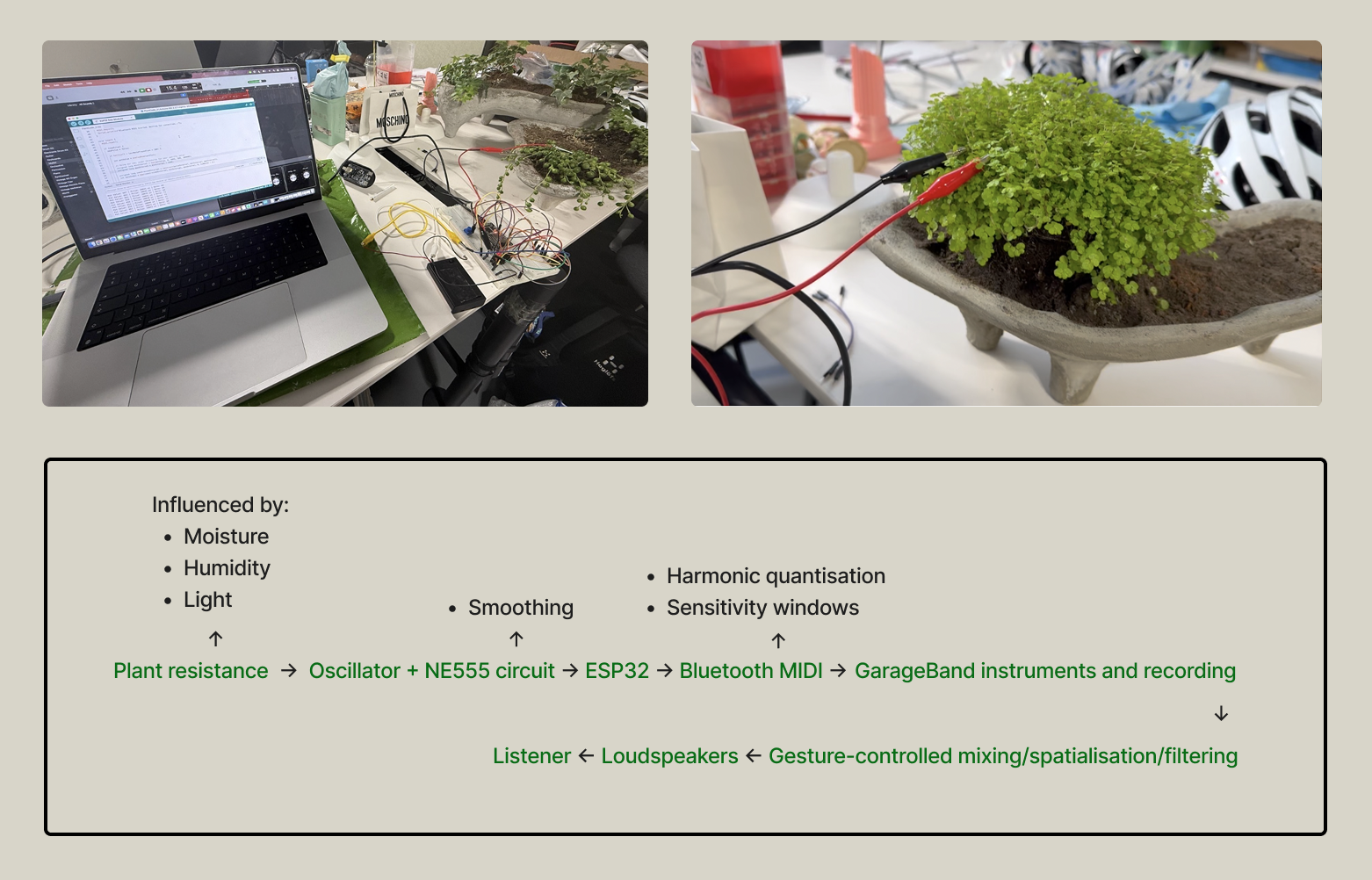

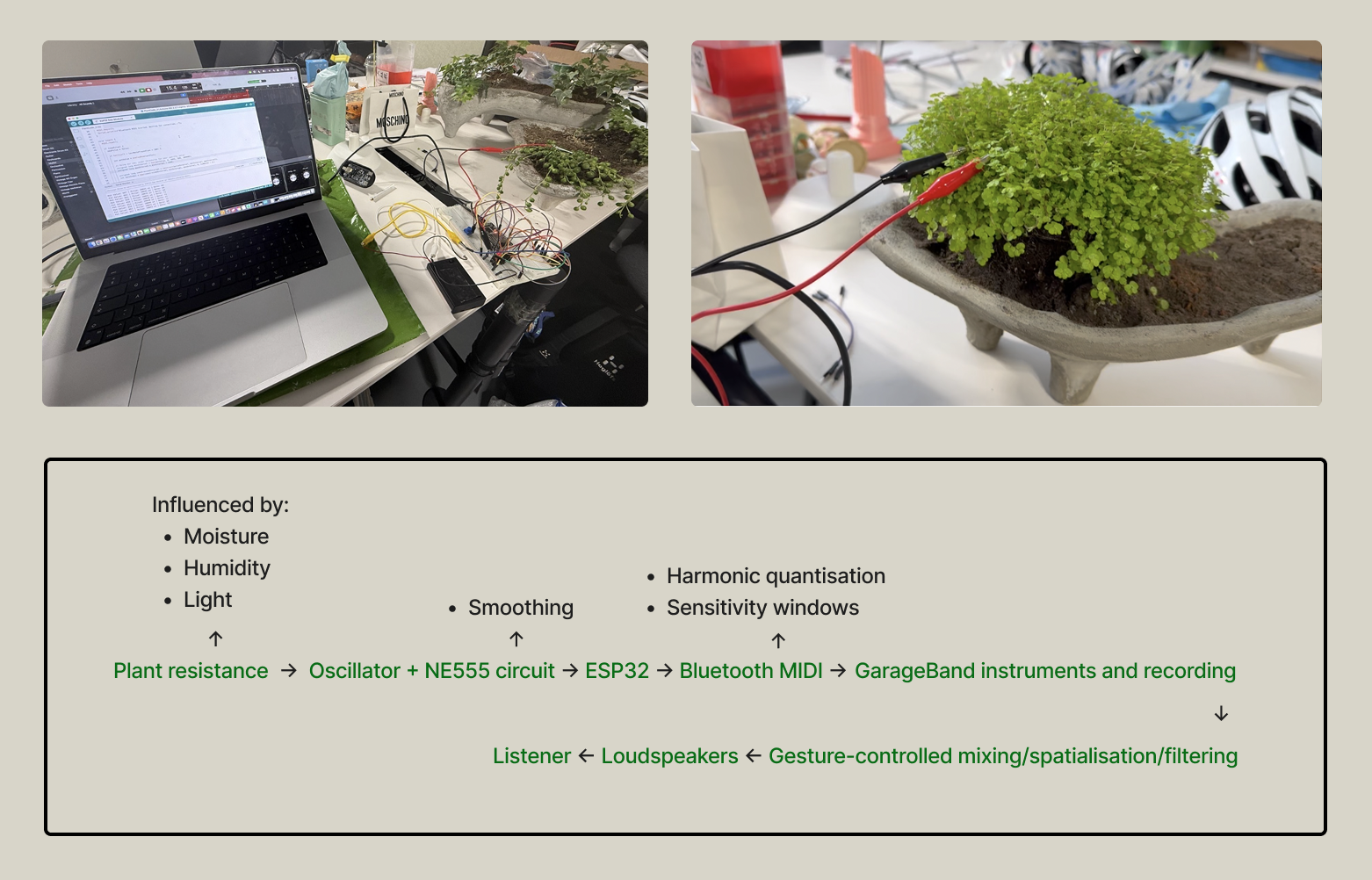

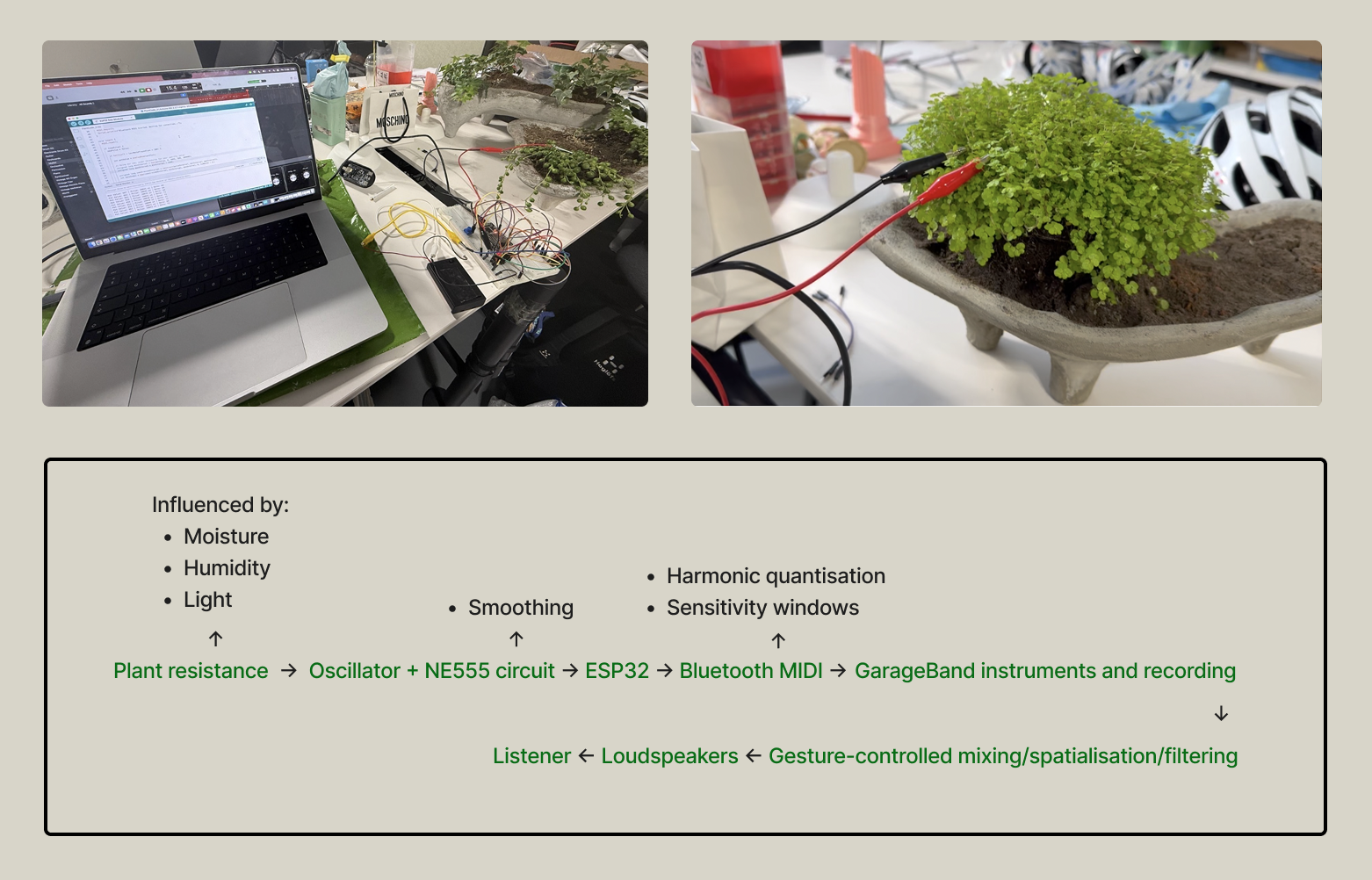

Iterative Development: Signal Processing

We developed a biofeedback system that translates the internal electrical fluctuations of living plants into ambient music, turning silent botanical processes into audible art.

Micro-Fluctuation Sensing: Biofeedback sensors capture micro-variations in the electrical resistance of the plant tissue.

Hardware Translation: A custom circuit and microcontroller convert these raw analog signals into digital BLE MIDI data.

Musical Mapping: The digital data is mapped to a specific musical scale, triggering software instruments in GarageBand to create cohesive ambient soundscapes.
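The scale-mapping step can be illustrated with a short sketch. The details are assumptions made for the example: the sensor range passed as `low` and `high`, the major pentatonic scale, the root note, and the two-octave span are all hypothetical, and sending the resulting note over BLE MIDI is left out.

```python
from typing import Sequence

# Semitone offsets of a major pentatonic scale (an assumed choice of scale).
PENTATONIC = (0, 2, 4, 7, 9)


def reading_to_midi_note(reading: float, low: float, high: float,
                         root_note: int = 60, octaves: int = 2,
                         scale: Sequence[int] = PENTATONIC) -> int:
    """Quantize a sensor reading in [low, high] to a MIDI note on the scale."""
    if high <= low:
        raise ValueError("high must be greater than low")
    # Normalize the reading to [0, 1], clamping out-of-range values.
    position = (reading - low) / (high - low)
    position = max(0.0, min(1.0, position))
    steps = len(scale) * octaves  # number of scale degrees available
    index = min(int(position * steps), steps - 1)
    octave, degree = divmod(index, len(scale))
    return root_note + 12 * octave + scale[degree]
```

Because every output lands on a scale degree, even erratic fluctuations in the plant signal produce notes that sound consonant together. With a 0 to 1023 input range, the lowest reading gives MIDI note 60 (middle C) and the highest gives 81.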

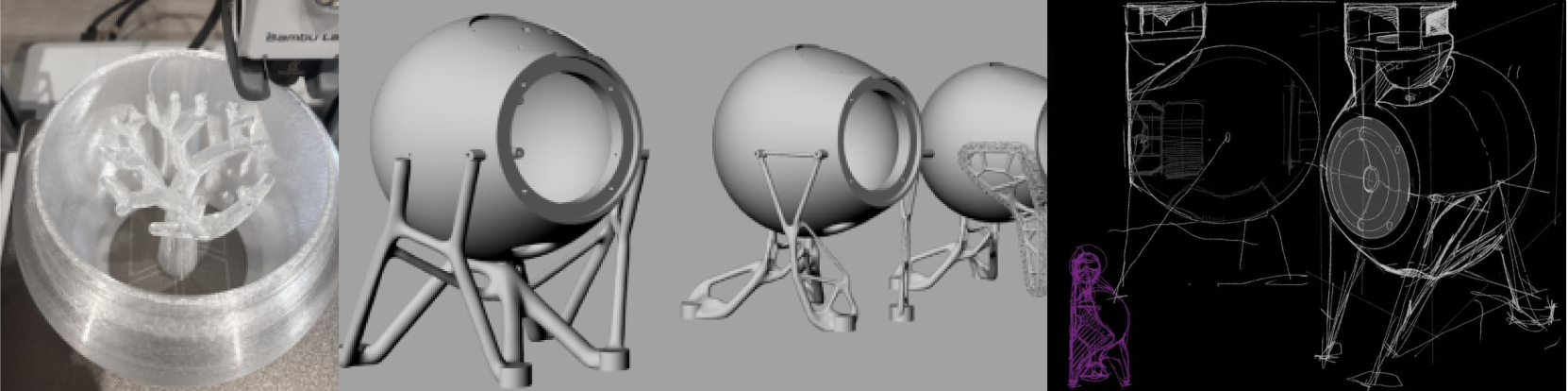

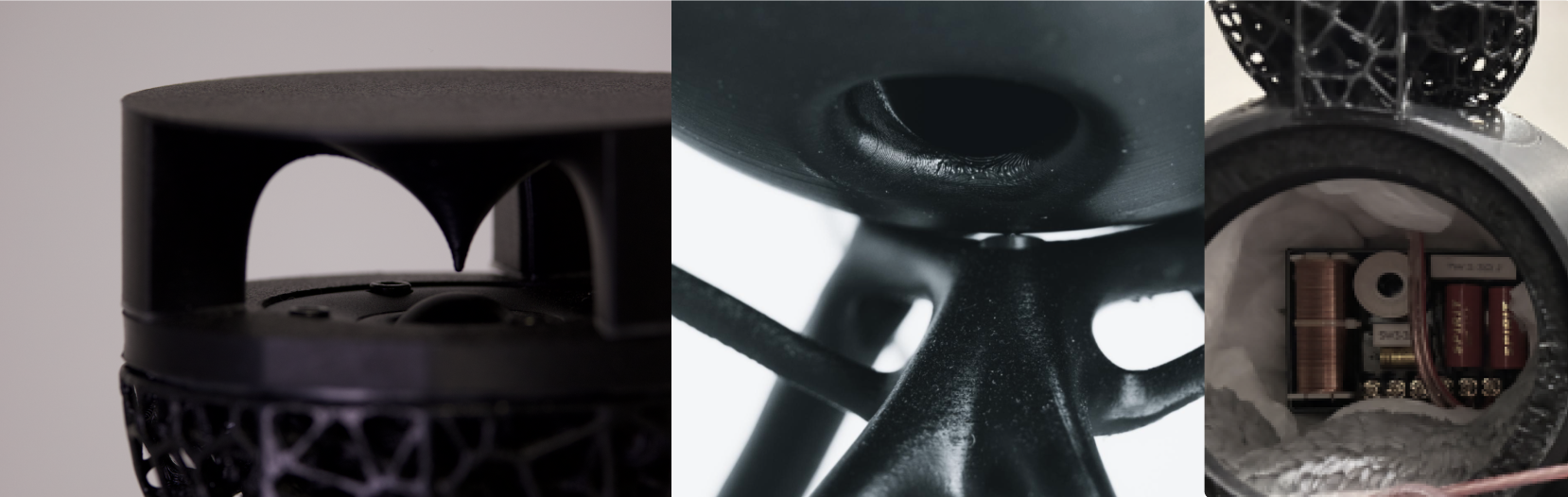

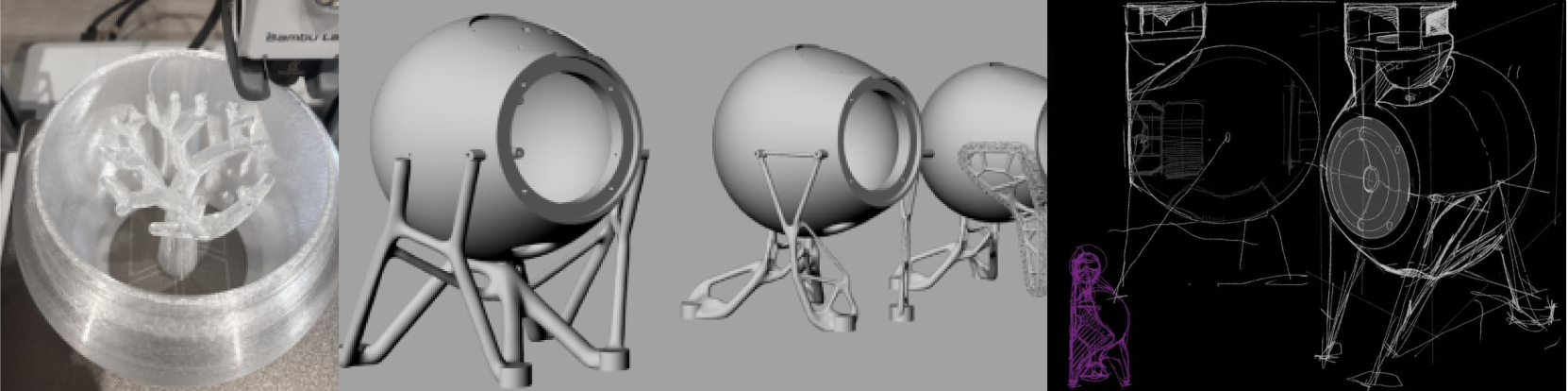

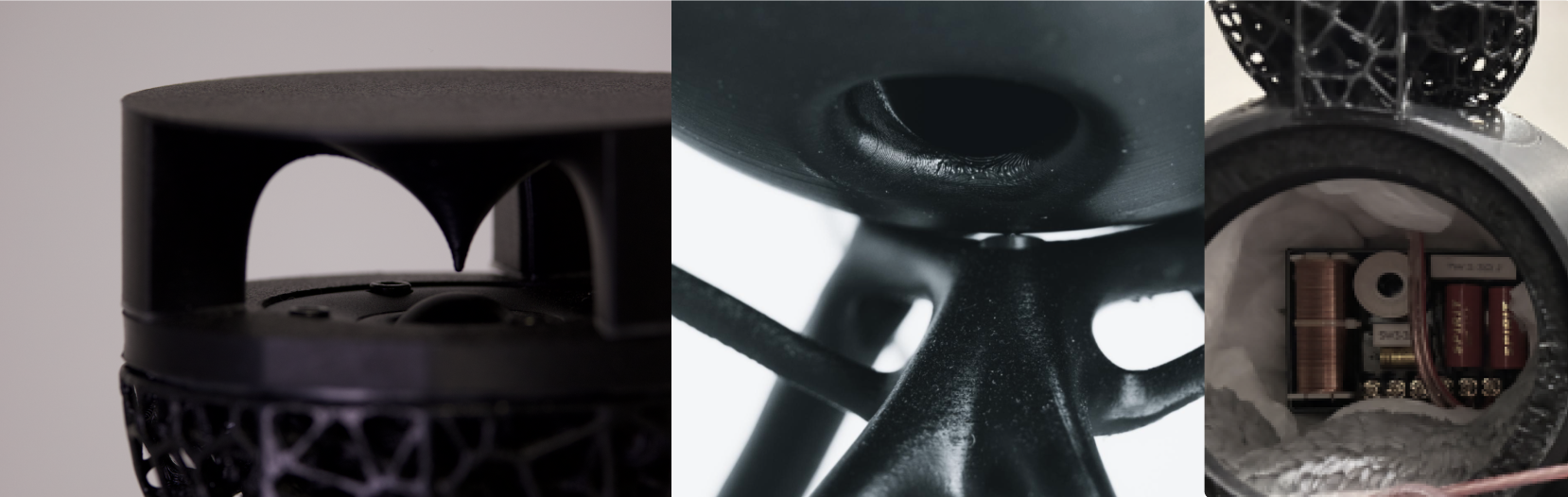

Iterative Development: Speaker Integration

To ensure precise spatial audio rendering, our installation integrated a custom-built, 2-way loudspeaker system developed by Xiangnan.

Enclosure Geometry: The speaker features a near-spherical geometry that reduces edge diffraction and internal standing waves, delivering a clean, uncoloured sound.

Omnidirectional Dispersion: A top reflector, profiled along an exponential curve, disperses the otherwise directional high frequencies evenly to support natural sound propagation.

Acoustic Isolation: A bottom opening stabilizes the woofer's low-frequency response, while a multi-leg structure decouples the enclosure from the table to prevent unwanted resonance.

Frequency Crossover: An internal crossover network splits the signal at 2800 Hz, maintaining a smooth transition between the tweeter and mid-woofer for real-time interaction.
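To give a sense of scale for the crossover components, the sketch below computes values for the simplest possible case. The network order and driver impedance are not stated here, so a first-order (6 dB/octave) network and a nominal 8 ohm load are assumptions; the actual speaker may use a higher-order design with different parts.

```python
import math
from typing import Tuple


def first_order_crossover(crossover_hz: float,
                          impedance_ohm: float) -> Tuple[float, float]:
    """Return (capacitor_farads, inductor_henries) for a first-order network."""
    omega = 2.0 * math.pi * crossover_hz
    capacitor = 1.0 / (omega * impedance_ohm)  # in series with the tweeter (high-pass)
    inductor = impedance_ohm / omega           # in series with the mid-woofer (low-pass)
    return capacitor, inductor


c, l = first_order_crossover(2800.0, 8.0)
print(f"C = {c * 1e6:.2f} uF, L = {l * 1e3:.3f} mH")
```

Under these assumptions, a 2800 Hz crossover works out to roughly 7.1 µF for the tweeter branch and 0.45 mH for the mid-woofer branch.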

Final Outcome: The Root Orchestra Installation

The project followed an iterative, non-linear workflow where technical prototyping directly informed design refinements. This adaptive process allowed us to continuously resolve technical constraints and align conceptual goals with real-world execution.

Core Technologies Used:

Biodata Sonification: Custom biofeedback sensors capture micro-fluctuations in plant electrical resistance, converting raw signals into BLE MIDI data to trigger generative music in real time.

Computer Vision & Gesture Control: A Python-based hand-tracking system translates physical hand movements into intuitive audio parameter modulations.

3D Spatial Audio Engine: Built with psychoacoustic depth mapping, the system applies amplitude panning, interaural level/time differences (ILD/ITD), and air absorption filters to anchor sound naturally within the physical space.

Acoustic & Physical Design: The installation features asymmetrical, concrete-coated planters designed to diffuse sound, integrated with custom-built 2-way speakers featuring omnidirectional high-frequency reflectors to ensure a balanced and consistent soundscape.
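The air absorption filtering mentioned in the spatial audio engine can be approximated in a simple way, sketched below. This is a common simplification and not necessarily what the installation uses: a one-pole low-pass whose cutoff falls as the virtual source moves away. The cutoff range and `max_distance_m` are hypothetical tuning values.

```python
import math
from typing import List


def air_absorption_cutoff(distance_m: float, near_cutoff_hz: float = 18000.0,
                          far_cutoff_hz: float = 4000.0,
                          max_distance_m: float = 20.0) -> float:
    """Interpolate the cutoff on a log-frequency scale between near and far."""
    t = max(0.0, min(1.0, distance_m / max_distance_m))
    return near_cutoff_hz * (far_cutoff_hz / near_cutoff_hz) ** t


def one_pole_lowpass(signal: List[float], cutoff_hz: float,
                     sample_rate: int = 48000) -> List[float]:
    """Apply y[n] = y[n-1] + a * (x[n] - y[n-1]) with a = 1 - exp(-2*pi*fc/fs)."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out: List[float] = []
    y = 0.0
    for x in signal:
        y += a * (x - y)
        out.append(y)
    return out
```

Filtering a source with `one_pole_lowpass(samples, air_absorption_cutoff(distance))` dulls distant sounds while leaving nearby ones bright, which complements the level and reverberation cues used for depth.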